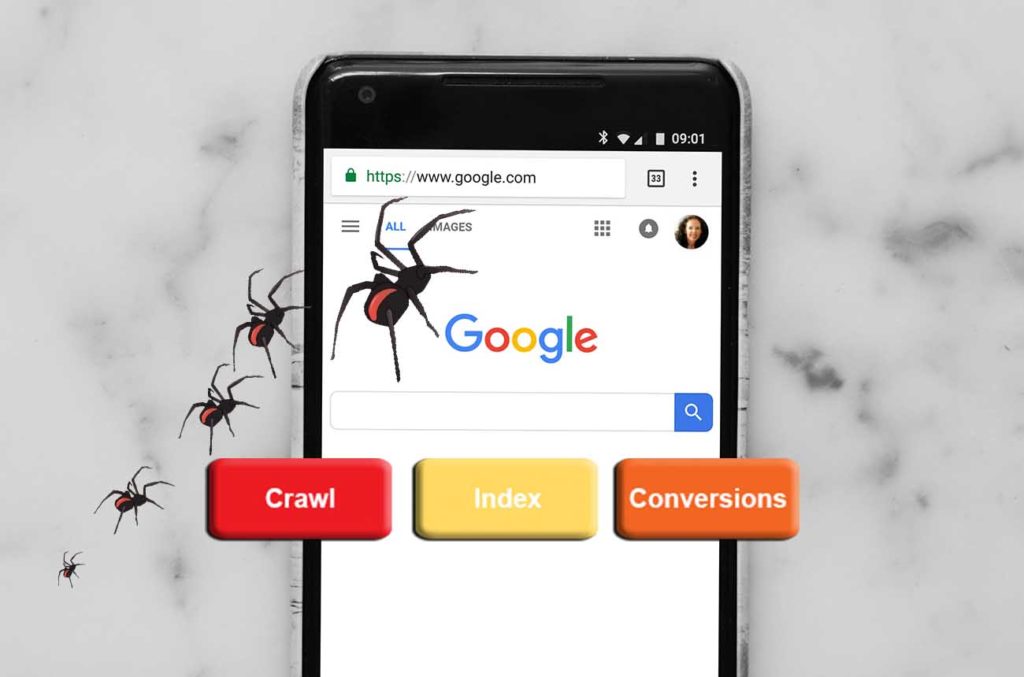

How are web crawlers indexed

The index is where your discovered pages are stored. After a crawler finds a page, the search engine renders it just like a browser would. In the process of doing so, the search engine analyzes that page's contents. All of that information is stored in its index.

What file does the web crawler look at

The robots.txt file.

A robots.txt file tells search engine crawlers which URLs the crawler can access on your site. This is used mainly to avoid overloading your site with requests; it is not a mechanism for keeping a web page out of Google.
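As a rough illustration of how a crawler might consult these rules, the sketch below uses Python's standard `urllib.robotparser` module. The robots.txt content, the `ExampleBot` user agent, and the example.com URLs are made up for the example and do not come from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for an example site (an assumption).
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

def build_parser(robots_txt):
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

parser = build_parser(ROBOTS_TXT)

# Ask whether a given user agent may fetch a given URL.
allowed_public = parser.can_fetch("ExampleBot", "https://example.com/blog/post")
allowed_private = parser.can_fetch("ExampleBot", "https://example.com/private/report")

# Crawl-delay is a hint about how long to wait between requests.
delay = parser.crawl_delay("ExampleBot")

print(allowed_public, allowed_private, delay)
```

A real crawler would fetch the file from the site first (for example with `set_url()` and `read()`); parsing a string here keeps the sketch runnable offline.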

What is the difference between crawling and indexing a website

Crawling is the discovery of pages and of the links that lead to more pages. Indexing is storing, analyzing, and organizing the content and the connections between pages. Some parts of indexing also help inform how a search engine crawls.
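To make the "storing and organizing" side concrete, here is a toy inverted index: a mapping from each word to the pages that contain it. This is only a minimal sketch under simplifying assumptions; the page texts and URLs are invented, and real search indexes store far more than word-to-page mappings.

```python
import re
from collections import defaultdict

def build_inverted_index(pages):
    """Map each lowercase word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in re.findall(r"[a-z0-9]+", text.lower()):
            index[word].add(url)
    return dict(index)

# Hypothetical pages that a crawler has already fetched.
pages = {
    "https://example.com/a": "Crawling discovers pages",
    "https://example.com/b": "Indexing stores pages",
}

index = build_inverted_index(pages)

# Looking up a word returns every page that mentions it.
print(sorted(index["pages"]))
```

Crawling produces the `pages` input; indexing is everything that happens to it afterwards.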

Are web crawlers used to automatically read through the contents of web sites also known as spiders

Web crawlers are computer programs that browse the Internet methodically and automatically. They are also known as robots, ants, or spiders. Crawlers visit websites and read their pages and other information to create entries for a search engine's index.

How do web crawlers rank websites

Indexing starts by analyzing website data, including written content, images, videos, and technical site structure. Google looks for positive and negative ranking signals, such as keywords and content freshness, to understand what each crawled page is about.

Do websites block web crawlers

Websites detect web crawlers and web scraping tools by checking their IP addresses, user agents, browser parameters, and general behavior. If a website finds the traffic suspicious, it serves CAPTCHAs, and if the behavior continues, it eventually blocks your requests.
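The sketch below shows, in very simplified form, how a site might combine two of those signals: the user agent string and the request rate per IP address. Everything in it is an assumption for illustration (the `RequestMonitor` class, the hint strings, the thresholds); real detection systems are considerably more involved.

```python
from collections import defaultdict, deque

# Assumed values for illustration only.
BOT_UA_HINTS = ("bot", "crawler", "spider", "python-requests")
MAX_REQUESTS = 5        # requests allowed per window
WINDOW_SECONDS = 10.0   # length of the sliding window

class RequestMonitor:
    """Flags a request as suspicious by user agent or by request rate."""

    def __init__(self):
        self._hits = defaultdict(deque)  # ip -> timestamps of recent requests

    def is_suspicious(self, ip, user_agent, now):
        # Signal 1: the user agent openly looks like an automated client.
        ua = user_agent.lower()
        if any(hint in ua for hint in BOT_UA_HINTS):
            return True
        # Signal 2: too many requests from one IP inside the window.
        hits = self._hits[ip]
        hits.append(now)
        while hits and now - hits[0] > WINDOW_SECONDS:
            hits.popleft()
        return len(hits) > MAX_REQUESTS

monitor = RequestMonitor()
flagged_by_agent = monitor.is_suspicious("203.0.113.7", "python-requests/2.31", 0.0)

# Six requests, one second apart, from a browser-like user agent.
rate_results = [
    monitor.is_suspicious("203.0.113.8", "Mozilla/5.0", float(t)) for t in range(6)
]
print(flagged_by_agent, rate_results)
```

Timestamps are passed in explicitly so the behavior is easy to reason about; a live server would use the current time instead.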

How do web crawlers find URLs

Because it is not possible to know how many total webpages there are on the Internet, web crawler bots start from a seed, or a list of known URLs. They crawl the webpages at those URLs first. As they crawl those webpages, they will find hyperlinks to other URLs, and they add those to the list of pages to crawl next.
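The seed-and-follow process described above can be sketched as a breadth-first traversal over a queue of URLs. To keep the example runnable without network access, the hypothetical `LINK_GRAPH` dictionary stands in for the web, and the `get_links` callback stands in for downloading a page and reading its hyperlinks.

```python
from collections import deque

def crawl(seeds, get_links, max_pages=100):
    """Visit pages breadth-first, starting from the seed URLs."""
    frontier = deque(seeds)   # pages waiting to be crawled
    seen = set(seeds)         # every URL ever queued, to avoid repeats
    order = []                # pages in the order they were crawled
    while frontier and len(order) < max_pages:
        url = frontier.popleft()
        order.append(url)
        for link in get_links(url):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return order

# Hypothetical link structure standing in for real pages.
LINK_GRAPH = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b", "https://example.com/c"],
    "https://example.com/b": ["https://example.com/"],
    "https://example.com/c": [],
}

visited = crawl(["https://example.com/"], lambda url: LINK_GRAPH.get(url, []))
print(visited)
```

The `seen` set is what stops the crawler from looping forever when pages link back to each other, and `max_pages` is a simple stand-in for a crawl budget.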

How are Web pages indexed

Website indexation is the process by which a search engine adds web content to its index. This is done by “crawling” webpages for keywords, metadata, and related signals that tell search engines if and where to rank content. Indexed websites should have a navigable, findable, and clearly understood content strategy.

Is Web scraping same as web crawling

Web scraping aims to extract the data on web pages, whereas web crawling aims to find and index web pages. Web crawling involves continually following hyperlinks from page to page. In comparison, web scraping involves writing a program that collects data from one or more websites, sometimes stealthily.

Is Google a web crawler or web scraper

Google Search is a fully automated search engine that uses software known as web crawlers, which explore the web regularly to find pages to add to its index.

How do web crawlers discover URLs

Crawlers discover new pages by re-crawling existing pages they already know about, then extracting the links to other pages to find new URLs. These new URLs are added to the crawl queue so that they can be downloaded at a later date.
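A minimal sketch of the link-extraction step, using only Python's standard library: it parses the anchors in a page, resolves relative links against the page URL, and queues the ones not already known. The HTML snippet, the URLs, and the `known` set are invented for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin

class LinkExtractor(HTMLParser):
    """Collects absolute URLs from the href attributes of <a> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links and drop any "#fragment" part.
                absolute, _fragment = urldefrag(urljoin(self.base_url, value))
                self.links.append(absolute)

def extract_links(base_url, html):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links

# Hypothetical page content fetched from https://example.com/blog/
HTML = '<a href="/about">About</a> <a href="post.html#top">Post</a>'
links = extract_links("https://example.com/blog/", HTML)

# Only URLs the crawler has not seen before go onto the crawl queue.
known = {"https://example.com/about"}
crawl_queue = [url for url in links if url not in known]
print(links, crawl_queue)
```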

Can websites detect web scraping

Yes. Some website protection systems support browser fingerprinting. If fingerprinting is enabled, the system uses browser attributes to help detect web scraping. If suspicious clients are set to alarm and block, the system collects browser attributes and blocks suspicious requests using the information obtained by fingerprinting.

Do websites prevent web scraping

Many websites have no anti-scraping mechanism, but some do block scrapers because they do not believe in open data access.

How do I know if a URL is crawlable

Enter the URL of the page or image to test and click Test URL. In the results, expand the "Crawl" section. The "Crawl allowed" field should read "Yes".

Do 404 pages get indexed

Many status codes indicate errors that prevent the server from granting you access to a page. The 404 status code is one of them: it means that the page is not available because the server couldn't find it. Google doesn't index 404 pages because they present no value to users.
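As a small illustration, the sketch below uses Python's `http.HTTPStatus` to name status codes and applies a deliberately simplified rule: only a 200 response is treated as a candidate for indexing. The `is_index_candidate` function and that rule are assumptions made for the example, not a description of how any search engine actually decides.

```python
from http import HTTPStatus

def is_index_candidate(status_code):
    """Simplified assumption: only successful (200) responses are indexable."""
    return status_code == HTTPStatus.OK

def describe(status_code):
    """Return the standard reason phrase for a status code."""
    return HTTPStatus(status_code).phrase

ok_candidate = is_index_candidate(200)
missing_candidate = is_index_candidate(404)
missing_phrase = describe(404)
print(ok_candidate, missing_candidate, missing_phrase)
```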

Are 404 pages indexed

If you have a page that has lots of high-quality links and results in a 404 error, you can still redirect that individual URL to a relevant page. Google doesn't index URLs with a 404 status, so your error page doesn't pass link juice.

Do all websites allow web scraping

Some websites allow scraping and some don't. To check whether a website permits it, append “/robots.txt” to the root URL of the website you are targeting, then read the rules that file lists for automated clients.
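Note that the file lives at the root of the site, not at the end of an arbitrary page URL. A small sketch of deriving its location from any page address follows; the `robots_url` helper and the example.com address are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit

def robots_url(page_url):
    """Build the robots.txt URL for the site that hosts page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host; replace path, query, and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))

location = robots_url("https://example.com/shop/item?id=3")
print(location)
```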

Is automated web scraping legal

United States: There are no federal laws against web scraping in the United States as long as the scraped data is publicly available and the scraping activity does not harm the website being scraped.

Is web crawler same as web scraping

The short answer is that web scraping is about extracting data from one or more websites, while crawling is about finding or discovering URLs or links on the web. Usually, in web data extraction projects, you need to combine crawling and scraping.

Is web crawling Legal vs web scraping

Web scraping and crawling aren't illegal by themselves. After all, you could scrape or crawl your own website, without a hitch. Startups love it because it's a cheap and powerful way to gather data without the need for partnerships.

Can you get IP banned for web scraping

Having your IP addresses banned as a web scraper is a pain. When websites block your IPs, you can't collect data from them, so anyone who wants to collect web data at any kind of scale needs to understand how to bypass IP bans.

Do some websites not allow web scraping

Some websites allow scraping and some don't. To check whether a website permits scraping, append “/robots.txt” to the root URL of the website you are targeting.

Why some websites Cannot be scraped

For instance, some websites use heavy JavaScript or AJAX, which can make web scraping more challenging. Additionally, some websites may have anti-scraping mechanisms in place that prevent data extraction, such as CAPTCHAs or IP blocking.

Has my website been crawled

To see if search engines like Google and Bing have indexed your site, enter "site:" followed by the URL of your domain. For example, "site:mystunningwebsite.com/". Note: By default, your homepage is indexed without the part after the "/" (known as the slug).

Does Google crawl 404 pages

404 crawling is a sign that Google has more than enough capacity to crawl more URLs from your site. 404 pages do not need to be blocked from crawling (for the purpose of preserving crawl budget).