Why SVM is better than other classifiers

Advantages: SVM classifiers often achieve good accuracy and can be more accurate than simpler models such as Naïve Bayes. They are also memory efficient, because the decision phase uses only a subset of the training points (the support vectors). SVM works well when there is a clear margin of separation and in high-dimensional spaces.
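To make the "subset of training points" idea concrete, here is a minimal sketch of a prediction step that touches only the support vectors. The support vectors, labels, dual coefficients (`alphas`), and `bias` below are hypothetical values chosen by hand for a two-point toy problem; in a trained SVM they would come out of the optimization.

```python
# Hypothetical toy model: two support vectors with a linear kernel.
# These numbers are illustrative assumptions, not output from a real solver.
support_vectors = [(1.0, 1.0), (3.0, 3.0)]
labels = [-1, 1]
alphas = [0.25, 0.25]
bias = -2.0


def linear_kernel(a, b):
    """Dot product of two equal-length vectors."""
    return sum(ai * bi for ai, bi in zip(a, b))


def decision_function(x):
    """Signed score: sum over support vectors of alpha * y * K(sv, x), plus bias."""
    total = sum(
        alpha * y * linear_kernel(sv, x)
        for alpha, y, sv in zip(alphas, labels, support_vectors)
    )
    return total + bias


def predict(x):
    """Class label (+1 or -1) from the sign of the decision function."""
    return 1 if decision_function(x) >= 0 else -1
```

Only the stored support vectors enter the sum, so every other training point can be discarded after training.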

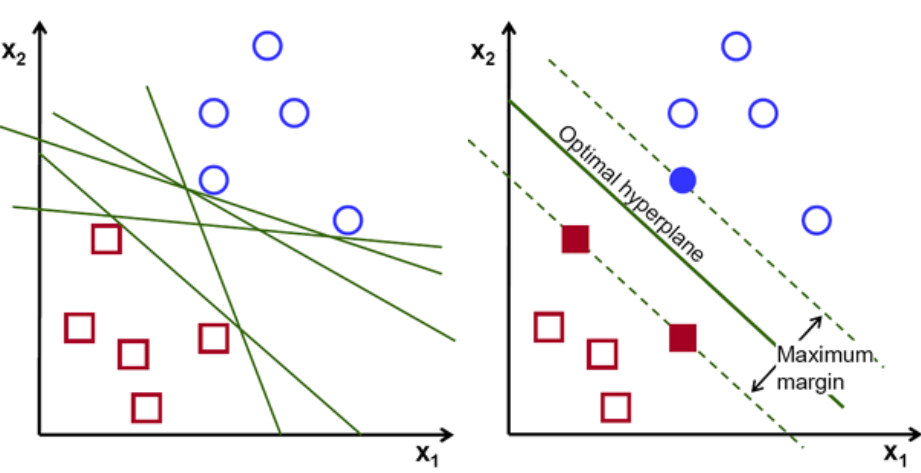

How is SVM different from other classifiers

SVMs differ from other classification algorithms in how they choose the decision boundary: they pick the one that maximizes the distance to the nearest data points of all the classes.
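As a rough illustration of what "maximizing the distance to the nearest points" means, the sketch below compares two candidate hyperplanes on a tiny made-up dataset and keeps the one with the larger geometric margin. The data and the candidates `A` and `B` are assumptions for illustration; a real SVM searches over all hyperplanes by solving an optimization problem rather than comparing a short list.

```python
import math

# Made-up 2-D points with labels -1 / +1 (illustrative only).
points = [((1.0, 1.0), -1), ((2.0, 0.0), -1), ((3.0, 3.0), 1), ((4.0, 2.0), 1)]


def geometric_margin(w, b, data):
    """Smallest signed distance from any point to the hyperplane w.x + b = 0."""
    norm = math.hypot(*w)
    return min(y * (w[0] * x[0] + w[1] * x[1] + b) / norm for x, y in data)


# Two hypothetical candidate boundaries, each given as (w, b).
candidates = {
    "A": ((1.0, 1.0), -4.0),
    "B": ((1.0, 0.0), -2.5),
}

# Keep the candidate whose nearest point is farthest away.
best = max(candidates, key=lambda name: geometric_margin(*candidates[name], points))
```

Both candidates separate the classes, but `A` leaves more room around the nearest points, which is the criterion an SVM optimizes.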

Why is SVM more accurate

An SVM separates the data into two classes with a hyperplane that lies between them. Its advantage is that the hyperplane is determined by the points lying closest to the opposite class (the support vectors), which tends to give a reliable classification.

Why SVM is more suitable for text classification

SVM generalizes well in high-dimensional spaces such as those that arise from text. It remains effective when there are more dimensions than samples, and it works well when classes are well separated. SVM is a binary model in its conception, although it can be applied to multiclass problems with very good results.
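One common way to stretch a binary model to several classes is one-vs-rest: train one binary scorer per class and pick the class whose scorer is most confident. The sketch below assumes three already-trained linear scorers; the class names, weights, and biases are hypothetical placeholders.

```python
# Hypothetical linear scorers, one per class, each stored as (weights, bias).
# In practice each would be a binary SVM trained as "this class vs. the rest".
binary_models = {
    "sports": ((1.0, 0.0, 0.0), -0.5),
    "politics": ((0.0, 1.0, 0.0), -0.5),
    "tech": ((0.0, 0.0, 1.0), -0.5),
}


def score(model, x):
    """Signed score of one binary scorer on feature vector x."""
    w, b = model
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def predict_one_vs_rest(x):
    """Return the class whose binary scorer gives the highest score."""
    return max(binary_models, key=lambda label: score(binary_models[label], x))
```

For example, `predict_one_vs_rest((0.1, 0.9, 0.2))` picks `"politics"` because that scorer gives the largest value.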

Why do we prefer SVM over neural networks

Neural networks often take significantly longer to train on a given dataset than SVMs. When training time is the main constraint, a support vector machine is often the better choice.

Why is SVM more accurate than KNN

KNN vs SVM:

SVM handles outliers better than KNN. If the number of training samples is much larger than the number of features (m >> n), KNN is better than SVM. SVM outperforms KNN when there are many features and little training data.

What is the advantage of SVM

The advantages of SVM and support vector regression include that kernels avoid the difficulty of working explicitly with linear functions in a high-dimensional feature space, and that the optimization problem is transformed into a dual convex quadratic program.
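For reference, the dual problem usually has the following shape for a soft-margin classifier. The symbols are assumptions not named in the text above: training pairs $(x_i, y_i)$ with labels $y_i \in \{-1, +1\}$, dual variables $\alpha_i$, a kernel $K$, and a penalty parameter $C$.

```latex
\max_{\alpha}\; \sum_{i=1}^{n} \alpha_i
  \;-\; \frac{1}{2} \sum_{i=1}^{n} \sum_{j=1}^{n}
        \alpha_i \alpha_j \, y_i y_j \, K(x_i, x_j)
\quad \text{subject to} \quad
0 \le \alpha_i \le C, \qquad \sum_{i=1}^{n} \alpha_i y_i = 0 .
```

The data enter only through $K(x_i, x_j)$, so the high-dimensional feature space never has to be constructed explicitly.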

Why SVM performs better than random forest

An SVM gives you the distance to the boundary; if you need a probability, you still have to convert that distance somehow. For problems where an SVM applies, it often performs better than a random forest. An SVM also gives you "support vectors", that is, the points in each class closest to the boundary between classes.
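One widely used way to turn a signed distance into a probability is to pass it through a sigmoid (often called Platt scaling). The sketch below assumes that approach; the parameters `a` and `b` are hypothetical defaults, and in practice they would be fitted on held-out data.

```python
import math


def distance_to_probability(distance, a=-1.0, b=0.0):
    """Sigmoid mapping from a signed distance to a probability of the positive class.

    a and b are illustrative defaults, not fitted values. With a < 0, larger
    positive distances map to probabilities closer to 1.
    """
    return 1.0 / (1.0 + math.exp(a * distance + b))
```

A point exactly on the boundary (distance 0) maps to 0.5 with these defaults, and points further on the positive side map to values closer to 1.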

Is SVM better than neural networks

For most modern problems, DNNs are the better choice. If your dataset is small and you succeed in finding a suitable kernel, however, an SVM may be a more efficient solution. If you cannot determine a suitable kernel, neural networks are the better choice.

Why SVM is better than decision tree

SVM works better when more training data is available. It can also adapt to changes in the data because it classifies in an n-dimensional space. With a linear kernel it scales to large datasets. It is powerful at learning complicated rules and efficient in performance.

Why is SVM better than CNN

Classification accuracy of SVM and CNN: in one study on hyperspectral data, SVM outperformed CNN and gave the best classification results. On the PCA bands, accuracy was 97.44% for linear SVM, 98.84% for SVM-RBF, and 94.01% for CNN. On all bands, only the SVM-linear accuracy (96.35%) is given, due to the size of the hyperspectral data …

Why is SVM best for image classification

The main advantage of SVM is that it can be used for both classification and regression problems. SVM draws a decision boundary, a hyperplane, between any two classes in order to separate them. SVM is also used in object detection and image classification.

Is SVM better for classification or regression

SVM tries to find the “best” margin (the distance between the decision boundary and the support vectors) that separates the classes, which reduces the risk of error on the data. Logistic regression does not; it can instead arrive at different decision boundaries, with different weights, that are near the optimal point.
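The difference shows up in the loss each model minimizes. The sketch below assumes the standard textbook forms: hinge loss for the SVM and logistic (log) loss for logistic regression, with labels in {-1, +1} and `score` standing for the signed output of the model. The function names are illustrative.

```python
import math


def hinge_loss(label, score):
    """SVM loss: zero once a point is on the correct side with margin at least 1."""
    return max(0.0, 1.0 - label * score)


def logistic_loss(label, score):
    """Logistic regression loss: small for confident correct points, never exactly zero."""
    return math.log(1.0 + math.exp(-label * score))
```

Because the hinge loss is exactly zero beyond the margin, only points on or inside the margin influence the SVM boundary, whereas every point contributes something to the logistic loss.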

Why SVM is better than linear regression

SVM regression, or Support Vector Regression (SVR), is a machine learning algorithm used for regression analysis. It differs from traditional linear regression in that, rather than minimizing the squared error on every point, it fits a function that keeps as many data points as possible within a margin (a tube of width epsilon) around it and penalizes only the points that fall outside.
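Support vector regression is commonly formulated with an epsilon-insensitive loss, which ignores errors smaller than a tolerance `epsilon`. The sketch below contrasts it with the squared error used by ordinary least squares; the function names and the default `epsilon=0.1` are illustrative assumptions.

```python
def epsilon_insensitive_loss(target, prediction, epsilon=0.1):
    """SVR-style loss: errors inside the epsilon tube cost nothing."""
    return max(0.0, abs(target - prediction) - epsilon)


def squared_loss(target, prediction):
    """Least-squares loss: every deviation is penalized, however small."""
    return (target - prediction) ** 2
```

A prediction of 1.05 for a target of 1.0 costs nothing under the epsilon-insensitive loss with `epsilon=0.1`, while the squared loss still penalizes it slightly.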

Why SVMs are more accurate than logistic regression

Unlike logistic regression, SVMs are designed to generate more complex decision boundaries. An LS-SVM with a simple linear kernel function corresponds to a linear decision boundary. Instead of a linear kernel, more complex kernel functions, such as the commonly used RBF kernel, can be chosen.
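For a sense of what the RBF kernel computes, here is a minimal sketch of the usual definition, K(x, z) = exp(-gamma * ||x - z||^2). The parameter name `gamma` and its default value are assumptions for illustration.

```python
import math


def rbf_kernel(x, z, gamma=0.5):
    """Similarity that is 1 for identical points and decays with squared distance."""
    squared_distance = sum((xi - zi) ** 2 for xi, zi in zip(x, z))
    return math.exp(-gamma * squared_distance)
```

Because the similarity depends on distance rather than on a single direction, a decision function built from RBF kernels can bend around clusters of points instead of being limited to a straight line.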

Why SVM is better than random forest

On the dataset in question, the SVM classifier achieves the higher model accuracy because the data is sparse and easy to classify, so SVM works faster and provides better results. Random forest also gives good results but does not match up to SVM for this particular dataset. The choice of algorithm depends on the desired outcome.

Is support vector machine better than logistic regression

Linear SVMs and logistic regression generally perform comparably in practice. Use SVM with a nonlinear kernel if you have reason to believe your data won't be linearly separable (or you need to be more robust to outliers than LR will normally tolerate).
